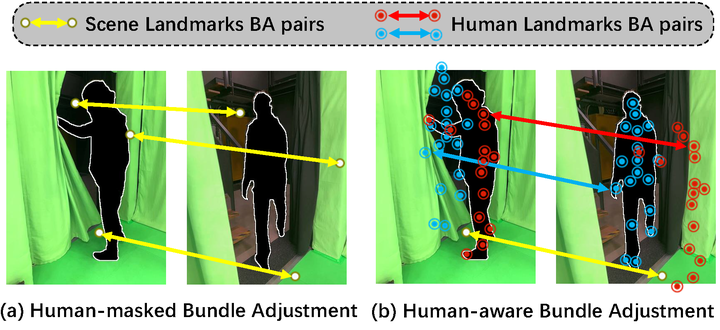

The framework overview of HumanBA.

Abstract

Recovering global human and camera motion from monocular video is essential for world-coordinate human reconstruction, but it remains challenging because the two motions are entangled in image space. Traditional SLAM methods estimate monocular camera motion but fail in scenes dominated by foreground objects such as humans. A common workaround is to mask out dynamic objects, yet this approach becomes brittle when humans occupy most of the view or the background is too noisy, leading to unstable tracking and loss of constraints. This paper takes the opposite stance and reintegrates moving humans as informative landmarks. We introduce HumanBA, a human-aware bundle adjustment framework that transforms dynamic humans into usable constraints via motion decoupling. HumanBA subtracts the human-induced component from observed joint trajectories, isolating a camera-induced (pseudo-static) component that can be safely incorporated into bundle adjustment alongside background features. To mitigate noise in global human estimates, HumanBA applies motion refinements and motion-aware reliability weighting. On the EMDB and SLOPER4D benchmarks, HumanBA consistently improves camera pose estimation and reduces global human reconstruction error, demonstrating the benefit of treating humans as dynamic yet informative landmarks.
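The motion-decoupling idea can be illustrated with a small NumPy sketch. This is not HumanBA's actual formulation, only one plausible reading of it: each joint is anchored at a reference-frame position, the estimated human-induced displacement is compensated before projection, and the remaining reprojection error is attributed to the camera. The function names `project` and `decoupled_residual`, the pinhole model, and the per-joint scalar weights are all assumptions made for illustration.

```python
import numpy as np

def project(K, R, t, X):
    """Pinhole projection of world points X (N, 3) to pixels (N, 2)."""
    Xc = X @ R.T + t              # world frame -> camera frame
    uv = Xc @ K.T                 # apply intrinsics
    return uv[:, :2] / uv[:, 2:3]

def decoupled_residual(K, R, t, joints_ref, human_disp, uv_obs, weights):
    """Weighted reprojection residual for joints used as pseudo-static landmarks.

    Hypothetical helper, not taken from the paper.
    joints_ref : (N, 3) joint positions at a reference frame (the landmarks)
    human_disp : (N, 3) estimated human-induced displacement since that frame
    uv_obs     : (N, 2) observed 2D joints in the current frame
    weights    : (N,)   reliability weights, assumed to lie in [0, 1]
    """
    # Compensating the human-induced displacement leaves only the
    # camera-induced motion to be explained by the pose (R, t).
    uv_pred = project(K, R, t, joints_ref + human_disp)
    return weights[:, None] * (uv_pred - uv_obs)
```

Under these assumptions, the residual vanishes at the true camera pose when the human displacement estimate is exact, so the joints constrain the camera in the same way static background points do. Down-weighting a joint reduces its influence when its motion estimate is unreliable.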